Architecting the Guardrails: A Global Search for the Next Generation of AI Alignment Talent

As advanced AI systems move from experimental tools to agentic infrastructure, closing the “Safety Gap” has become a central research priority. OpenAI has announced its Safety Fellowship, a pilot program designed to support independent researchers, engineers, and practitioners in developing high-impact alignment solutions.

This is a strategic call for those who prioritize technical rigor and empirical grounding over traditional credentials. The fellowship is an invitation to solve the most complex questions facing the future of artificial intelligence.

THE RESEARCH MISSION

The program focuses on safety and alignment questions that are critical for both current models and the frontier systems of tomorrow. OpenAI is prioritizing work that is technically strong and relevant to the broader global research community.

Priority Research Areas:

- Safety Evaluation & Robustness: Stress-testing models against failure modes.
- Scalable Mitigations: Developing safety measures that grow with model capability.
- Agentic Oversight: Researching how to govern autonomous AI agents.
- Privacy-Preserving Safety: Ensuring alignment without compromising data integrity.
- High-Severity Misuse: Mitigating risks in domains like cybersecurity and bio-risk.
- Ethics & HCI: Addressing human-computer interaction and systemic bias.

THE FELLOWSHIP STRUCTURE

This is a high-intensity, output-oriented program running from September 14, 2026, to February 5, 2027.

- Mentorship & Peer Cohort: Fellows will work closely with OpenAI mentors and engage with an elite cohort of global safety researchers.
- Hybrid Workspace: Access to dedicated workspace at Constellation in Berkeley, California, with the option for remote participation.
- Research Output: Every fellow is expected to produce a substantial contribution, such as a peer-reviewed paper, a new safety benchmark, or an open-source dataset, by the program’s conclusion.
- Resource Support: Includes a monthly stipend, API credits, and significant compute support to power your research.

ELIGIBILITY & SELECTION

OpenAI is looking for “Research Ability” over “Specific Credentials.” The program welcomes applicants from diverse backgrounds:

- Fields: Computer Science, Social Science, Cybersecurity, Privacy, HCI, and related disciplines.
- Criteria: Technical judgment and a proven track record of execution.
- Requirements: Letters of reference are mandatory for all applicants.

KEY TIMELINES

- Applications Close: May 3, 2026
- Notification Date: July 25, 2026
- Program Starts: September 14, 2026

HOW TO APPLY

If you are ready to contribute to the global safety framework of advanced AI, submit your research proposal and application via the official OpenAI portal:

🔗 APPLY HERE: OpenAI Safety Fellowship Portal

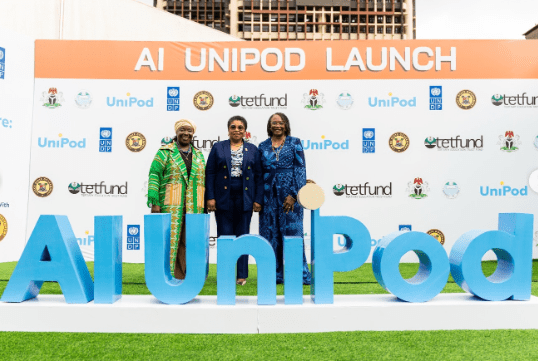

IndexPrima Note: As Africa architects its own AI Declaration, local researchers must be at the table where “Global Safety Standards” are written. This fellowship represents a critical opportunity for African technical talent to influence the alignment of systems that will eventually underpin our digital economy.